Dan Malone

How I Built a Manifest-Driven Video Engine with Remotion and ElevenLabs

February 6, 2026

11 min read

View Source Code

github.com/Danm72/video-engine

TL;DR

I wanted a repeatable way to ship short launch videos without the usual editing chaos. The core move was manifest-first: JSON scenes, deterministic rendering in Remotion, generated audio from ElevenLabs, and a quality review loop before publish.

I kept hitting the same wall.

Text posts were grand. Audio had a clean pipeline. Images were sorted. But short launch videos were still a mess: screen recordings, ad-hoc edits, random music picks, and no way to reproduce the result next week.

I'm not gonna lie to you, I burned way too many hours pretending this could be solved with "just one more manual edit." It couldn't.

So I built a dedicated video-engine repo and treated video like code.

- Define scenes as data.

- Generate or reuse audio.

- Render deterministically.

- Review against quality targets.

Inspiration tweet: Thariq's original post on X.

Why a Separate Engine

I tested a few approaches. Template-only was too rigid. Conversational scene building sounded cool but was painful to replay exactly.

Manifest-driven won because it gave me control and replayability.

| Approach | What Broke | Why I Dropped It |
|---|---|---|
| Manual editing | Slow, inconsistent | Fine for one-offs, painful for pipeline work |
| Prompt-only generation | Hard to audit | Too much hidden state and fuzzy diffs |
| Manifest-driven | More upfront design | Best tradeoff for repeatable output |

project context -> manifest.json -> audio generation -> Remotion render -> review report

The Core Stack

None of this is exotic on its own. The value is how the pieces fit together.

- Remotion for frame-driven React rendering

- TransitionSeries for scene transitions

- Zod for manifest validation

- ElevenLabs Sound Effects API for SFX

- ElevenLabs Music API for background tracks

- parseMedia for duration checks

The strict rule in this engine: no CSS timeline animations. Motion comes from useCurrentFrame(), spring(), and interpolate() so renders stay deterministic and debuggable.
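To make the rule concrete, here is a minimal, dependency-free sketch of frame-driven motion. `clampedLerp` and `fadeInOpacity` are hypothetical helpers written for illustration, not code from the repo. In an actual Remotion component the frame would come from `useCurrentFrame()` and the mapping from `interpolate()`. The point is only that the value is a pure function of the frame number, so the same frame always renders the same way.

```typescript
// Hypothetical stand-in for a clamped linear mapping from a frame range to a value range.
function clampedLerp(
  frame: number,
  [inStart, inEnd]: [number, number],
  [outStart, outEnd]: [number, number],
): number {
  // Normalise the frame into 0..1 and clamp so values never overshoot the output range.
  const t = Math.min(1, Math.max(0, (frame - inStart) / (inEnd - inStart)));
  return outStart + t * (outEnd - outStart);
}

// Fade in over the first half second. Depends only on frame and fps, never on wall-clock time.
function fadeInOpacity(frame: number, fps: number): number {
  return clampedLerp(frame, [0, fps / 2], [0, 1]);
}

// Sample a few frames at 30fps to inspect the curve.
console.log([0, 5, 15, 30].map((f) => fadeInOpacity(f, 30)));
```

Because nothing here reads a clock or CSS state, rendering frame 5 twice gives the same opacity twice, which is what makes a bad frame easy to reproduce and debug.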

Manifest-First, Not Component-First

The manifest is the source of truth. Components are just the renderer.

```json
{
  "meta": { "title": "My Demo", "fps": 30, "width": 1920, "height": 1080 },
  "theme": { "bgColor": "#0a0a0a", "accentColor": "#D57456" },
  "music": { "prompt": "warm uplifting lo-fi", "durationMs": 35000 },
  "scenes": [
    { "id": "intro", "type": "split-intro", "durationMs": 3200 },
    { "id": "focus", "type": "camera-sequence", "durationMs": 5200 },
    { "id": "proof", "type": "animated-card", "durationMs": 5000 }
  ]
}
```

This gave me three quick wins:

- Scene review got easier because timing and transitions were explicit.

- Regeneration became cheap: edit JSON, rerender, compare.

- Validation caught bad scene types and missing assets before long renders.
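The engine uses Zod for this, but the kind of check involved can be sketched without any dependencies. The version below is a hand-rolled stand-in, not the repo's schema: `validateScenes` and the `SCENE_TYPES` list are assumptions, with the type names taken only from the example manifest.

```typescript
// Scene types seen in the example manifest; the real engine may support more.
const SCENE_TYPES = ["split-intro", "camera-sequence", "animated-card"] as const;

type Scene = { id: string; type: string; durationMs: number };

// Returns a list of human-readable problems; an empty list means the scenes look valid.
function validateScenes(scenes: Scene[]): string[] {
  const errors: string[] = [];
  const seenIds = new Set<string>();
  for (const scene of scenes) {
    if (!(SCENE_TYPES as readonly string[]).includes(scene.type)) {
      errors.push(`${scene.id}: unknown scene type "${scene.type}"`);
    }
    if (!Number.isFinite(scene.durationMs) || scene.durationMs <= 0) {
      errors.push(`${scene.id}: durationMs must be a positive number`);
    }
    if (seenIds.has(scene.id)) {
      errors.push(`${scene.id}: duplicate scene id`);
    }
    seenIds.add(scene.id);
  }
  return errors;
}

const problems = validateScenes([
  { id: "intro", type: "split-intro", durationMs: 3200 },
  { id: "focus", type: "camera-sequnce", durationMs: 5200 }, // typo on purpose
]);
console.log(problems);
```

Running a check like this before the render is what turns a misspelled scene type into a one-second failure instead of a wasted render.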

Video Demo: Reference vs Recreation

1) Reference Video (what we are recreating)

- Original post: Thariq reference post on X

2) Recreated Version (final output)

- Final render version: twitter-insights-v11.mp4

What the Quality Gap Actually Looked Like

The first renders looked rough. Flat motion. Clipped text. Contrast issues on light cards. Springs that felt like they were stuck in mud.

So I did a frame-level comparison against the reference videos and forced myself to set hard targets instead of guessing.

| Dimension | Target |
|---|---|
| Base background | #0a0a0a |
| Accent color | #D57456 |
| Video length | 30-40s |
| Frame rate | 30fps |
| Transition timing | ~350-800ms |
| Music RMS | -36dB to -38dB |

Then I tackled fixes in phases: clipping and contrast first, spring tuning second, transition and scene-type polish after that. Less glamorous, way more effective.
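Hard targets are only useful if something checks them. As a rough sketch, the length and frame-rate targets from the table above could be tested against a manifest's scenes like this. The helper names (`msToFrames`, `totalFrames`, `lengthWithinTarget`) are hypothetical, and the sketch ignores transition overlap, which in a `TransitionSeries` would shorten the real timeline.

```typescript
type Scene = { id: string; durationMs: number };

// Targets copied from the table above.
const TARGETS = { fps: 30, minSeconds: 30, maxSeconds: 40 };

// Convert a millisecond duration to a whole number of frames.
function msToFrames(ms: number, fps: number): number {
  return Math.round((ms / 1000) * fps);
}

// Sum scene durations in frames. Overlapping transitions are deliberately ignored.
function totalFrames(scenes: Scene[], fps: number): number {
  return scenes.reduce((sum, s) => sum + msToFrames(s.durationMs, fps), 0);
}

// True when the summed scene length falls inside the target window.
function lengthWithinTarget(scenes: Scene[]): boolean {
  const seconds = totalFrames(scenes, TARGETS.fps) / TARGETS.fps;
  return seconds >= TARGETS.minSeconds && seconds <= TARGETS.maxSeconds;
}

// The three scenes from the abbreviated example manifest.
const exampleScenes: Scene[] = [
  { id: "intro", durationMs: 3200 },
  { id: "focus", durationMs: 5200 },
  { id: "proof", durationMs: 5000 },
];
console.log(totalFrames(exampleScenes, TARGETS.fps), lengthWithinTarget(exampleScenes));
```

The three example scenes add up to 13.4s, so on their own they would miss the 30-40s window. Presumably the example manifest is abbreviated and a full one carries more scenes.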

Audio-First Was the Right Call

I originally planned visuals first. That was a mistake, full stop.

Switching to audio-first made timing sane again:

- Parse manifest for SFX/music needs.

- Generate or reuse cached audio via prompt hash.

- Measure real durations.

- Update manifest timing.

- Render with known assets.
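The "reuse cached audio via prompt hash" step can be sketched as follows. This is an assumption about how such a cache might work, not the repo's implementation: the `AudioRequest` shape, the normalisation of the prompt, the 16-character key, and the `cache/audio` layout are all made up for illustration.

```typescript
import { createHash } from "node:crypto";

type AudioRequest = { kind: "sfx" | "music"; prompt: string; durationMs: number };

// Derive a stable cache key: identical requests always map to the same key.
// Trimming and lowercasing the prompt is an assumption so cosmetic edits do not bust the cache.
function audioCacheKey(req: AudioRequest): string {
  const canonical = JSON.stringify([req.kind, req.prompt.trim().toLowerCase(), req.durationMs]);
  return createHash("sha256").update(canonical).digest("hex").slice(0, 16);
}

// Hypothetical cache layout: one file per key under a cache directory.
function cachePath(req: AudioRequest, cacheDir = "cache/audio"): string {
  return `${cacheDir}/${req.kind}-${audioCacheKey(req)}.mp3`;
}

const music: AudioRequest = { kind: "music", prompt: "warm uplifting lo-fi", durationMs: 35000 };
console.log(cachePath(music));
```

If the file at that path already exists, the generation call can be skipped. Changing the prompt or the duration produces a new key, so stale audio is never reused by accident.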

That removed a lot of the "why is this transition late?" debugging spiral.

| Layer | Typical Usage | Est. Cost |
|---|---|---|
| SFX | 5-8 cues | $0.30-$0.50 |
| Music | 1 track | $0.10-$0.20 |
| Total per video | 30-40s clip | $0.40-$0.70 |

The Review Loop That Made It Usable

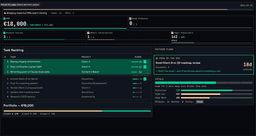

The turning point was building a single review command after render.

Before this, I was basically squinting at frames and arguing with myself. After this, I got a concrete report:

- Audio profile and mood checks

- Visual structure checks

- Comparison scorecard vs reference

- PASS/WARN/FAIL with next actions

```shell
python3 scripts/review-render.py out/video.mp4 \
  --reference data/reference-profiles/v1-insights.json \
  --output out/reviews/video-review.md
```
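The review script itself is Python and its internals are not shown here. As a loose illustration of the PASS/WARN/FAIL idea, the sketch below rolls individual checks up into one verdict and a short report. It is written in TypeScript to match the rest of the stack, and every name in it (`Check`, `overallVerdict`, `renderReport`) is hypothetical.

```typescript
type Verdict = "PASS" | "WARN" | "FAIL";
type Check = { name: string; verdict: Verdict; nextAction?: string };

const SEVERITY: Record<Verdict, number> = { PASS: 0, WARN: 1, FAIL: 2 };

// The overall verdict is the worst individual verdict; no checks at all counts as PASS.
function overallVerdict(checks: Check[]): Verdict {
  return checks.reduce<Verdict>(
    (worst, c) => (SEVERITY[c.verdict] > SEVERITY[worst] ? c.verdict : worst),
    "PASS",
  );
}

// Render a small Markdown report, with next actions for anything not passing.
function renderReport(checks: Check[]): string {
  const lines = [`Overall: ${overallVerdict(checks)}`, ""];
  for (const c of checks) {
    const action = c.verdict !== "PASS" && c.nextAction ? ` (next: ${c.nextAction})` : "";
    lines.push(`- ${c.verdict} ${c.name}${action}`);
  }
  return lines.join("\n");
}

// Illustrative input only; these are not real results from the engine.
console.log(
  renderReport([
    { name: "Audio profile", verdict: "PASS" },
    { name: "Visual structure", verdict: "WARN", nextAction: "check text clipping on light cards" },
  ]),
);
```

Taking the worst verdict as the overall result keeps the report honest: one failing dimension cannot hide behind several passing ones.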

What I Learned (The Hard Way)

- Determinism beats convenience for production content.

- A manifest is not overhead, it's your safety rail.

- Quality criteria need numbers, not vibes.

- Audio timing quietly breaks everything if you ignore it.

- Review automation matters before adding more scene types.

Also: if animation feels dead, check spring damping before rewriting half your scene library. Ask me how I know.
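One quick way to sanity-check a spring before touching scene code is the damping ratio from basic mass-spring physics. Remotion's `spring()` accepts a `config` with `mass`, `stiffness`, and `damping`; the helpers below are not part of Remotion, and the example values are illustrative rather than the engine's actual settings.

```typescript
type SpringConfig = { mass: number; stiffness: number; damping: number };

// Damping ratio of a mass-spring-damper system:
// below 1 it overshoots, at 1 it is critically damped, above 1 it creeps toward the target.
function dampingRatio({ mass, stiffness, damping }: SpringConfig): number {
  return damping / (2 * Math.sqrt(stiffness * mass));
}

// Turn the ratio into a rough description of how the motion will feel.
function describeSpring(config: SpringConfig): string {
  const ratio = dampingRatio(config);
  if (ratio < 1) return "underdamped: settles with some bounce";
  if (ratio === 1) return "critically damped: fastest settle without overshoot";
  return "overdamped: slow approach, can feel stuck";
}

console.log(describeSpring({ mass: 1, stiffness: 100, damping: 10 }));
console.log(describeSpring({ mass: 1, stiffness: 100, damping: 200 }));
```

A ratio far above 1 is the "stuck in mud" feeling: the value crawls toward its target with no life in it. Lowering `damping` or raising `stiffness` pulls the ratio back down.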

What Comes Next

- Better templates by video archetype

- Cleaner social format variants (1:1, 9:16)

- Tighter /content-video integration in the full content pipeline

- Stronger visual regression checks

It remains to be seen how far I can push full automation without losing quality, but this is already a massive step up from manual editing roulette.

The Bottom Line: Treat video generation like software engineering, and it stops being a creative bottleneck. The manifest becomes your spec, rendering becomes your compiler, and review scripts become your QA loop.
