Dan Malone

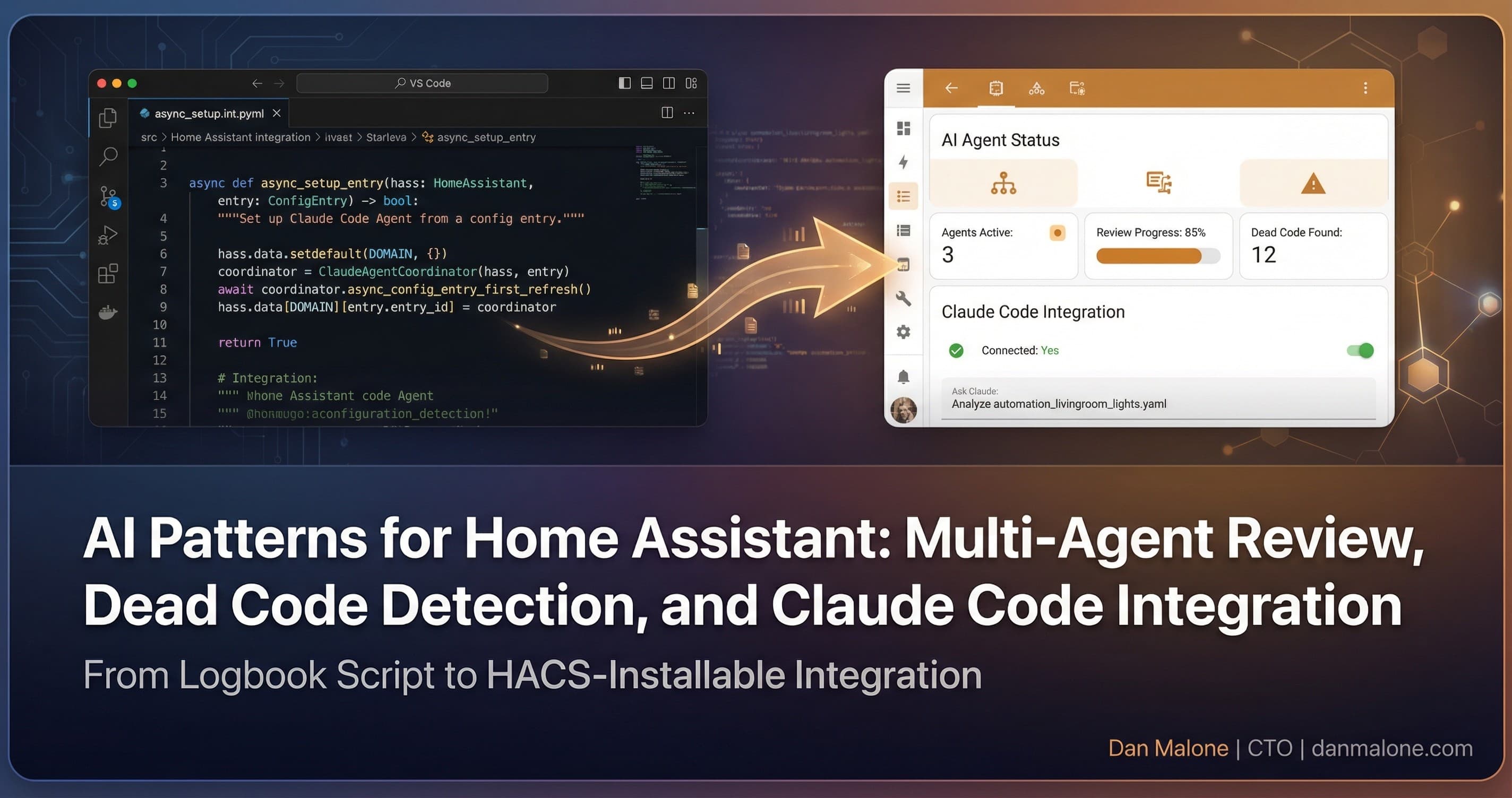

AI Patterns for Home Assistant: Multi-Agent Review, Dead Code Detection, and Claude Code Integration

January 26, 2026

5 min read

View Source Code

github.com/Danm72/ha-dashboard-2026

Connect on LinkedIn

linkedin.com/in/d-malone

Follow on Twitter/X

x.com/danmalone_mawla

So, I've got about 1800 lines of Home Assistant YAML config that's accumulated over the years. Every time something breaks at 2am, I add another automation. Every time I get a new smart device, more YAML. And honestly? I had no idea what half of it was actually doing anymore.

That's when I started experimenting with using Claude Code and multi-agent patterns to wrangle this mess. Here's what I learned.

The Problem: Configuration Chaos

If you've been running Home Assistant for more than a year, you probably know what I'm talking about. You've got:

- Automations from that one time the motion sensor was being weird

- Scripts you wrote for a device you don't even own anymore

- Duplicate automations with slightly different triggers because you forgot the first one existed

I had 47 automations and 12 scripts. But which ones were actually running? No idea.

Multi-Agent Code Review

Here's the thing - I could read through all 1800 lines manually. But that sounds awful, right? So I tried something different: running multiple specialized AI agents in parallel, each looking at the config from a different angle.

I set up five agents:

- Security Sentinel - looking for exposed credentials or risky configurations

- Architecture Strategist - checking for structural issues and patterns

- Pattern Recognition - finding duplicates and inconsistencies

- Code Simplicity - flagging over-engineered automations

- Data Integrity - checking for entity mismatches

Running them in parallel took about 20 minutes. Manual review would've been... I don't even want to think about it.
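For a rough idea of the shape of this, here's a minimal sketch of fanning one config out to several reviewers at once. The run_agent function is a hypothetical stand-in (it just returns a summary string), and the focus prompts are my paraphrase of the list above, so treat this as an illustration of the pattern rather than the actual setup.

```python
from concurrent.futures import ThreadPoolExecutor

# The five review angles from the list above. The focus strings are
# illustrative wording, not the real prompts.
AGENTS = {
    "Security Sentinel": "exposed credentials or risky configurations",
    "Architecture Strategist": "structural issues and patterns",
    "Pattern Recognition": "duplicates and inconsistencies",
    "Code Simplicity": "over-engineered automations",
    "Data Integrity": "entity mismatches",
}


def run_agent(name: str, focus: str, config_text: str) -> str:
    """Hypothetical placeholder for a real agent call.

    Swap the body for whatever actually invokes your model or CLI.
    """
    line_count = len(config_text.splitlines())
    return f"[{name}] reviewed {line_count} lines, focus: {focus}"


def review_in_parallel(config_text: str) -> dict[str, str]:
    """Run every agent against the same config concurrently."""
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        futures = {
            name: pool.submit(run_agent, name, focus, config_text)
            for name, focus in AGENTS.items()
        }
        # result() blocks until that agent finishes, so the total wait is
        # roughly the slowest agent rather than the sum of all five.
        return {name: future.result() for name, future in futures.items()}
```

Each agent gets the full config and a single concern, which is the point of the pattern: five narrow reviews instead of one broad one.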

What the Agents Found

The results were actually pretty interesting. They found:

- Three automations with the same ID (which means only one was actually running)

- Eight automations that hadn't triggered in over 12 months

- One automation for my kitchen blinds that was 636 lines long. Six hundred and thirty-six lines for blinds.

- Entity name mismatches where my templates expected light.kitchen_lights but the actual entity was light.kitchen_z2m

The security agent flagged some stuff too, but I'll be honest - I kinda deprioritized that for convenience. It's a home automation system, not a bank.

Finding Dead Code

So I wanted to validate what the agents found about dead automations. Turns out Home Assistant keeps saved entity state in the .storage directory. There's a file called core.restore_state that includes a last_triggered timestamp for each automation.

A bit of jq magic later:

jq -r '.data[] | select(.state.entity_id | test("^automation\\."))' core.restore_state

And yeah, the agents were right. Three automations had literally never triggered. Ever. And eight more were dead for over a year.
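The jq filter only pulls out the automation entries; sorting them into "never ran" and "ran ages ago" is a second step. Here's a sketch of that step in Python. It assumes each entry looks like {"state": {"entity_id": ..., "attributes": {"last_triggered": ...}}}, that last_triggered is either null or an ISO 8601 timestamp with a UTC offset, and that `now` is timezone-aware. The function names are mine.

```python
import json
from datetime import datetime, timedelta, timezone


def load_restore_state(path: str) -> dict:
    """Read the JSON file, e.g. .storage/core.restore_state."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def stale_automations(restore_state: dict, now: datetime, max_age_days: int = 365):
    """Return (never_triggered, stale) lists of automation entity ids."""
    cutoff = now - timedelta(days=max_age_days)
    never, stale = [], []
    for item in restore_state.get("data", []):
        state = item.get("state", {})
        entity_id = state.get("entity_id", "")
        if not entity_id.startswith("automation."):
            continue
        last = state.get("attributes", {}).get("last_triggered")
        if last is None:
            # No timestamp recorded: the automation has never fired.
            never.append(entity_id)
        elif datetime.fromisoformat(last) < cutoff:
            stale.append(entity_id)
    return sorted(never), sorted(stale)
```

To use it, call stale_automations(load_restore_state(".storage/core.restore_state"), datetime.now(timezone.utc)). Dropping max_age_days to 180 gives you a six-month threshold instead of a year.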

The Tooling Setup

Getting Claude Code connected to my Home Assistant instance was its own journey. I looked at a few options:

- ha-mcp: 82 tools for entity control, automation management, history queries. This is the main workhorse.

- claude-skill-homeassistant: Higher-level workflow patterns for deployment and management.

- hass-cli: Command-line tool, useful but limited.

The winning combo was ha-mcp for the low-level API access plus custom skills for the workflow patterns. They complement each other - MCP gives you the API, skills give you the patterns.

One gotcha: you need long-lived access tokens for MCP authentication, not OAuth. Took me longer than I'd like to admit to figure that out.
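If you want to sanity-check a token before wiring it into anything, Home Assistant's REST API accepts a long-lived access token as a bearer token. This sketch is not ha-mcp's configuration (that isn't shown here), just a direct call to /api/states. The base URL and token values are placeholders you'd replace with your own.

```python
import json
import urllib.request


def build_request(base_url: str, token: str, path: str = "/api/states") -> urllib.request.Request:
    """Build an authenticated request against the Home Assistant REST API."""
    return urllib.request.Request(
        base_url.rstrip("/") + path,
        headers={
            # The long-lived access token goes in a standard bearer header.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def fetch_states(base_url: str, token: str) -> list:
    """Fetch all entity states; raises urllib.error.HTTPError on a bad token."""
    with urllib.request.urlopen(build_request(base_url, token), timeout=10) as resp:
        return json.load(resp)
```

A 401 from fetch_states means the token itself is the problem, which is a quicker thing to rule out than debugging the MCP layer on top of it.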

What I'd Do Differently

Looking back, there are a few things:

- Run agent reviews regularly, not just when things are broken. Maybe quarterly.

- Set up entity naming conventions before you have 200 entities with random names.

- Delete aggressively. If an automation hasn't run in 6 months, it's probably not needed.

The multi-agent pattern isn't just for code review though. I'm now using it for planning changes, validating configs before deployment, that sort of stuff.

The Takeaway

If you've got a large Home Assistant config, you're probably carrying a lot of dead weight. The combination of AI agents for review and execution trace analysis can help you figure out what's actually being used.

Is it perfect? Nah. The agents sometimes flag stuff that's fine, and they miss things a human would catch. But it's a lot better than reading 1800 lines of YAML manually.

Let's be honest, nobody wants to do that.

Tags

home-assistant

ai-patterns

claude-code

automation
